Using AI Tools to Write and Review Nonprofit Website Content

Using AI Tools for Nonprofit Website Content: A Practical Guide

AI Writing Tools: What They Are and What They Aren’t

AI writing tools — ChatGPT, Claude, Gemini, and dozens of sector-specific alternatives — can generate text that sounds professional, reads fluently, and covers a topic comprehensively. For a Communications Director managing a nonprofit website with limited time and no dedicated copywriter, this looks like a solution to the content bottleneck.

It is a tool, not a solution. The distinction matters because AI-generated content for nonprofit websites carries specific risks that don’t apply to a marketing agency writing product descriptions or a tech company publishing documentation.

This guide covers how to use AI tools effectively for nonprofit website content while avoiding the governance, credibility, and accuracy risks that come with uncritical adoption.

What AI Tools Do Well for Nonprofit Content

First drafts of structured content. AI excels at generating well-organised first drafts of content types with predictable structures: programme descriptions, event summaries, FAQ sections, policy overviews, and resource guides. These drafts need editing, but they eliminate the blank-page problem that consumes disproportionate time for busy communicators.

Reformatting and restructuring. Taking existing content and restructuring it for a different format — turning a report into a web page summary, converting a Board paper into a public-facing overview, condensing a 20-page strategy document into key points for the website — is a strong use case. The AI isn’t generating new information; it’s reorganising information you’ve already produced.

SEO-informed content frameworks. AI can generate title tag suggestions, meta descriptions, FAQ structures based on common search queries, and heading hierarchies that align with search intent. These are mechanical tasks that AI handles well and that save significant time in content production.

Consistency checking. AI can review content for tone consistency, identify jargon that needs translating for a public audience, and flag sections that are unclear or overly technical. It is effective at identifying where content doesn’t match a specified style guide or audience level.

What AI Tools Cannot Do for Nonprofit Content

Verify facts or data. AI tools generate plausible-sounding text, including plausible-sounding statistics, programme descriptions, and organisational claims. If you ask an AI to write about your organisation’s impact, it will produce convincing text that may contain entirely fabricated data points. Every factual claim in AI-generated content must be verified against your own records. This is non-negotiable for nonprofit websites where credibility depends on accuracy.

Represent lived experience. Beneficiary stories, programme testimonials, and first-person accounts of organisational impact cannot be AI-generated. These are the most valuable content types on a nonprofit website precisely because they are authentic. AI-generated personal narratives are fabrication, regardless of how convincing they sound.

Make governance judgments. What should appear on the website, what should be removed, what level of detail is appropriate for different audiences, how to frame a sensitive programme area — these are governance decisions that require human judgment, organisational knowledge, and accountability. AI can suggest options; it cannot make the decision.

Guarantee originality. AI tools are trained on existing content and can produce text that closely resembles published material. For nonprofit websites, this creates a risk of unintentional plagiarism — particularly when generating content about well-documented topics where specific phrasings are common across the sector.

The Governance Framework for AI Content

If your organisation uses AI tools for website content, you need a governance framework that addresses three questions: who can use AI tools for content creation, what review process applies to AI-generated content before publication, and how AI use is disclosed (if at all).

Who can use AI tools. AI tools should be available to Communications staff as a productivity tool, not as a replacement for editorial judgment. The person using the AI tool should have sufficient subject knowledge to verify the output and sufficient editorial skill to refine it. Using AI to generate content about a programme area you don’t understand is a recipe for publishing inaccurate information.

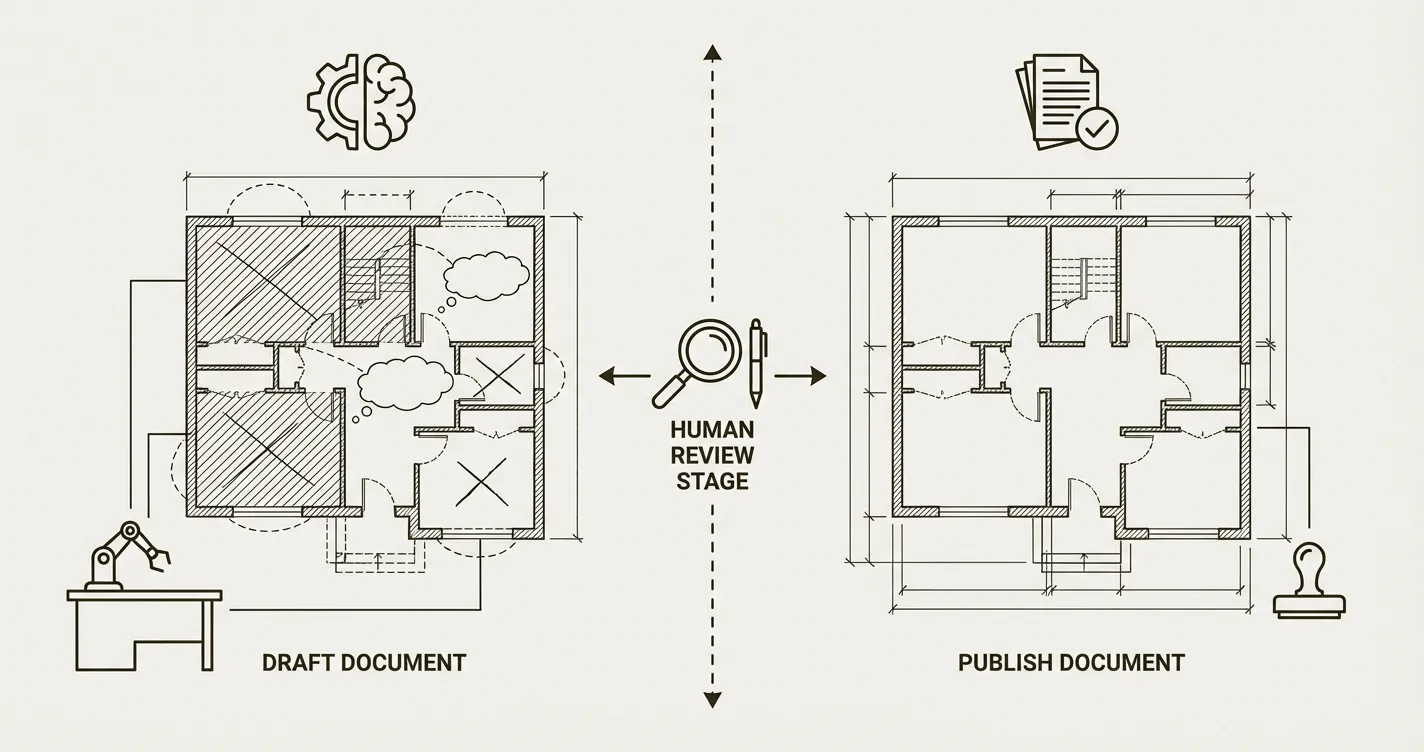

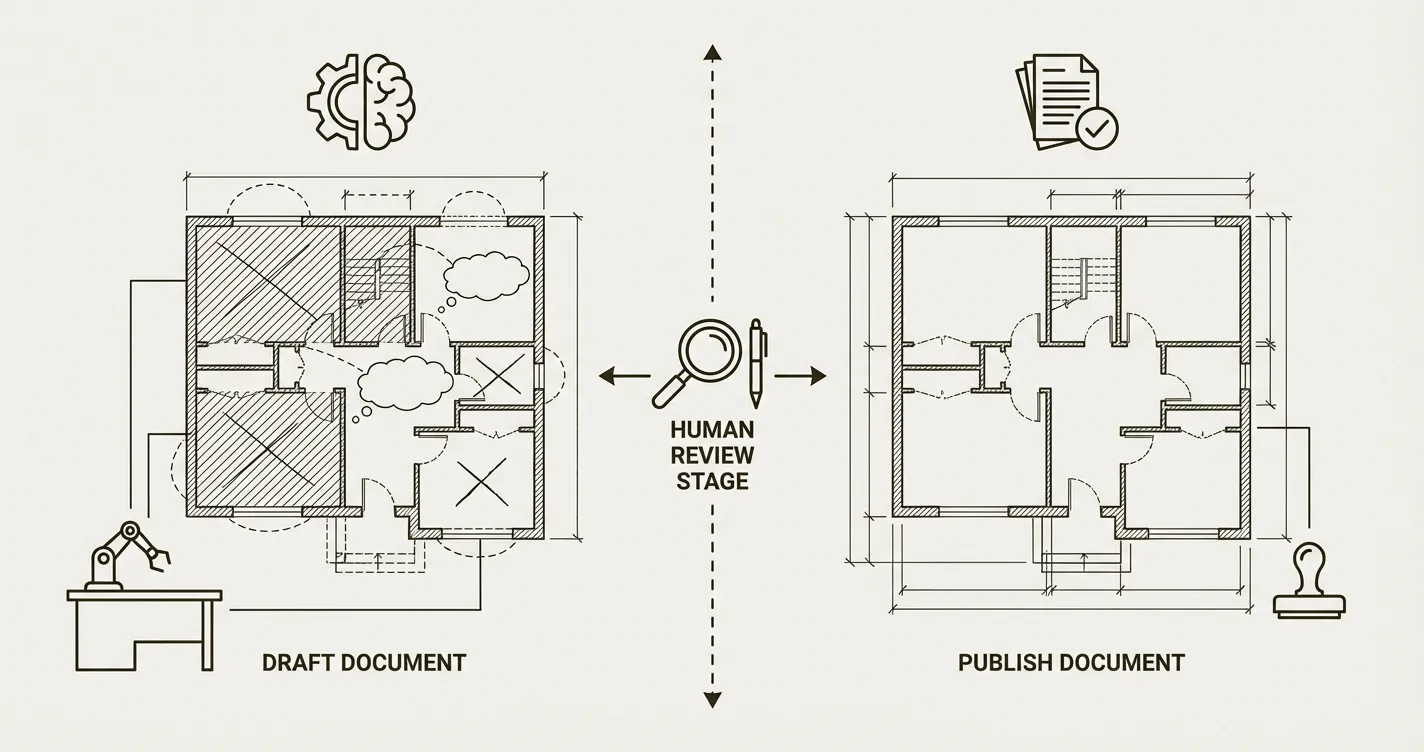

What review process applies. AI-generated first drafts should go through the same editorial review as human-written first drafts — with an additional verification step for factual claims. The reviewer should be aware that the draft was AI-assisted so they can apply appropriate scrutiny. In practice, this means: AI generates the structure and initial text, the Communications Director reviews and rewrites for accuracy and voice, and the standard approval process applies before publication.

Disclosure. There is no current legal requirement in the UK to disclose AI use in website content. However, some funders and sector bodies are developing policies on AI-generated content. The governance decision is whether your organisation discloses AI use proactively (building trust through transparency) or treats AI as an internal tool (like spell-checkers or grammar tools) that doesn’t require disclosure. Either position is defensible; the important thing is that the organisation has made a deliberate decision rather than leaving it to individual staff judgment.

Practical Workflow: AI-Assisted Content Production

Here is the workflow I recommend for Communications Directors using AI tools for Webflow CMS content:

Step 1: Brief the AI with specificity. Don’t ask for “a blog post about our youth programme.” Provide the AI with: your organisation’s actual programme description, the specific outcomes you want to highlight, the target audience (funders, beneficiaries, partners), the tone you want, and any specific points that must be included or excluded. The more specific the brief, the less rewriting required.
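As a rough illustration of Step 1, a brief can be kept as a small structured record so that no element is forgotten before prompting. This is only a sketch: `ContentBrief` and `build_prompt` are hypothetical names, the prompt wording is an assumption, and the resulting text would be pasted into whichever AI tool you use.

```python
from dataclasses import dataclass, field


@dataclass
class ContentBrief:
    """Hypothetical container for the brief elements listed in Step 1."""
    programme_description: str  # your organisation's actual wording
    outcomes: list[str]         # specific outcomes to highlight
    audience: str               # e.g. "funders", "beneficiaries", "partners"
    tone: str
    must_include: list[str] = field(default_factory=list)
    must_exclude: list[str] = field(default_factory=list)


def build_prompt(brief: ContentBrief) -> str:
    """Turn a brief into plain prompt text; refuse vague briefs."""
    if not brief.programme_description.strip() or not brief.outcomes:
        raise ValueError("brief needs a programme description and at least one outcome")
    lines = [
        "Draft a web page using ONLY the information below.",
        "Do not add statistics or claims that are not listed here.",
        f"Programme description: {brief.programme_description}",
        f"Outcomes to highlight: {'; '.join(brief.outcomes)}",
        f"Audience: {brief.audience}",
        f"Tone: {brief.tone}",
    ]
    # Optional constraints are only added when the brief supplies them.
    if brief.must_include:
        lines.append(f"Must include: {'; '.join(brief.must_include)}")
    if brief.must_exclude:
        lines.append(f"Must exclude: {'; '.join(brief.must_exclude)}")
    return "\n".join(lines)
```

Rejecting a brief with no programme description or outcomes is a deliberate choice here: it forces the specificity the step asks for before any draft is generated.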

Step 2: Generate the draft. Use the AI to produce a structured first draft. Ask for heading suggestions, paragraph structure, and a recommended word count. If the first output isn’t right, refine the brief rather than accepting a mediocre draft.

Step 3: Fact-check everything. Go through the draft line by line. Every statistic, every programme description, every claim about impact must be verified against your own data. Replace any AI-generated data with your actual figures. Remove any claims you cannot substantiate.
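One way to make the line-by-line pass in Step 3 harder to skip is to list every sentence that contains a number or quantity word, so each one can be ticked off against your records. The sketch below is a minimal aid under stated assumptions: `flag_claims` and the word list in `CLAIM_PATTERN` are hypothetical, it only surfaces sentences for a human to check, and it will miss qualitative claims that contain no figures.

```python
import re

# Assumed heuristic: any digit, or one of a few quantity words, marks a claim to verify.
CLAIM_PATTERN = re.compile(
    r"\d|\b(?:percent|per cent|half|doubled|tripled|majority)\b",
    re.IGNORECASE,
)


def flag_claims(draft: str) -> list[str]:
    """Return the sentences in a draft that contain figures or quantity words."""
    # Split after sentence-ending punctuation followed by whitespace,
    # so decimals such as "3.5" are not broken apart.
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    return [s for s in sentences if CLAIM_PATTERN.search(s)]
```

An empty result does not mean the draft is accurate; it only means no numeric claims were detected.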

Step 4: Rewrite for voice. AI text tends toward a generic, slightly corporate tone. Rewrite for your organisation’s voice. For most nonprofits, this means: simpler language, shorter sentences, more specific examples, and removing the kind of impressive-sounding but empty phrases that AI defaults to (“we are committed to making a meaningful difference”).

Step 5: SEO review. Check that the content includes your target keyword naturally, that the heading hierarchy is correct (H1, H2, H3), that the meta title and description are compelling, and that internal links to related content are included.
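The heading check in Step 5 can be partly automated if you have the page's HTML to hand. The following sketch uses Python's standard `html.parser` module; `HeadingCollector` and `heading_issues` are hypothetical names, and treating "exactly one H1, no skipped levels" as the definition of a correct hierarchy is an assumption based on common practice rather than a rule stated in this guide.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collects heading levels (1 to 6) in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    """Return human-readable problems with the heading hierarchy."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels
    issues = []
    if levels.count(1) != 1:
        issues.append(f"expected exactly one h1, found {levels.count(1)}")
    # A heading may go at most one level deeper than the one before it.
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"h{previous} is followed by h{current} (skipped level)")
    return issues
```

Whether the meta title and description are compelling, and whether the keyword reads naturally, remain editorial judgments that a check like this cannot make.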

Step 6: Standard editorial review. The draft goes through whatever approval process your governance policy specifies — typically Communications Director review, with Executive Director or programme lead sign-off for content about specific programmes or organisational claims.

What Not to Do

Don’t publish AI output directly. No matter how good the initial output looks, it has not been verified, it doesn’t reflect your organisational voice, and it may contain errors that undermine your credibility. AI output is a starting point, not a finished product.

Don’t use AI for annual reports, impact data, or governance documents. These are the highest-stakes content on your website — the content that funders, regulators, and journalists scrutinise most closely. They must be written from verified organisational data by people who understand the context and can be held accountable for the claims made.

Don’t generate fake testimonials or case studies. This should be obvious but it’s worth stating explicitly: AI-generated beneficiary quotes, donor testimonials, or case studies are fabrication. They are the digital equivalent of making up quotes in a press release. If discovered, the reputational damage is severe and justified.

Don’t assume consistency. AI tools can produce different outputs from the same prompt. If you’re generating content for multiple pages (programme descriptions, team profiles), review the full set for consistency rather than treating each output independently.

AI and Accessibility

One area where AI tools can add genuine value is accessibility compliance. AI can draft or review alt text for images, check whether content meets readability standards, and identify potential accessibility issues in draft content before it’s published.

However, AI-generated alt text should always be reviewed — the tool doesn’t know your organisation’s context and may describe an image in ways that miss the point of why it’s on the page. Good alt text describes the function of the image in context, not just what’s visually depicted.
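For the readability check mentioned above, a score can also be computed without an AI tool. The sketch below implements the Flesch Reading Ease formula, where higher scores mean easier text. The syllable counter is a rough heuristic, the function names are hypothetical, and any target score you set is your own editorial decision rather than something this guide prescribes.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count vowel groups, discounting a trailing silent 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text: str) -> float:
    """Flesch Reading Ease: 206.835 - 1.015*(words/sentences) - 84.6*(syllables/words)."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (
        206.835
        - 1.015 * (len(words) / len(sentences))
        - 84.6 * (syllables / len(words))
    )
```

Scores are most useful for comparing two versions of the same passage, since the syllable heuristic makes the absolute number approximate.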

For full accessibility guidance, see WCAG AA Accessibility on Webflow.

The Bottom Line

AI tools can meaningfully reduce the time a Communications Director spends on website content production — particularly for structured content types, reformatting tasks, and SEO frameworks. They cannot replace editorial judgment, verify facts, represent authentic experience, or make governance decisions.

The organisations that will use AI well are the ones that treat it as a tool within a governance framework — with clear policies on who can use it, what review process applies, and what content types are off-limits. The organisations that will damage their credibility are the ones that publish AI output without verification, generate fake testimonials, or use AI to produce content about programme areas nobody on the team actually understands.

For related guidance, see AI Search and Funder Discovery.


Eric Phung has 7 years of Webflow development experience, having built 100+ websites across industries including SaaS, e-commerce, professional services, and nonprofits. He specialises in nonprofit website migrations using the Lumos accessibility framework (v2.2.0+) with a focus on editorial independence and WCAG AA compliance. Current clients include WHO Foundation, Do Good Daniels Family Foundation, and Territorio de Zaguates. Based in Manchester, UK, Eric focuses exclusively on helping established nonprofits migrate from WordPress and Wix to maintainable Webflow infrastructure.


