What AI Tools Get Wrong About Your Nonprofit — and Why It's a Governance Problem

The research journey for a major gift starts long before a donor contacts your organisation. A funder's due diligence begins weeks before the formal application stage. A journalist investigating your sector types your name into an AI tool between reading two other sources.

What those tools return shapes the first impression. And for most nonprofits, that impression is either vague, partly wrong, or built from information that was accurate three years ago.

This isn't primarily an SEO problem. It's a content infrastructure problem — and left unaddressed, it's a governance problem.

Why AI Tools Are Now Part of Your Stakeholder Research Journey

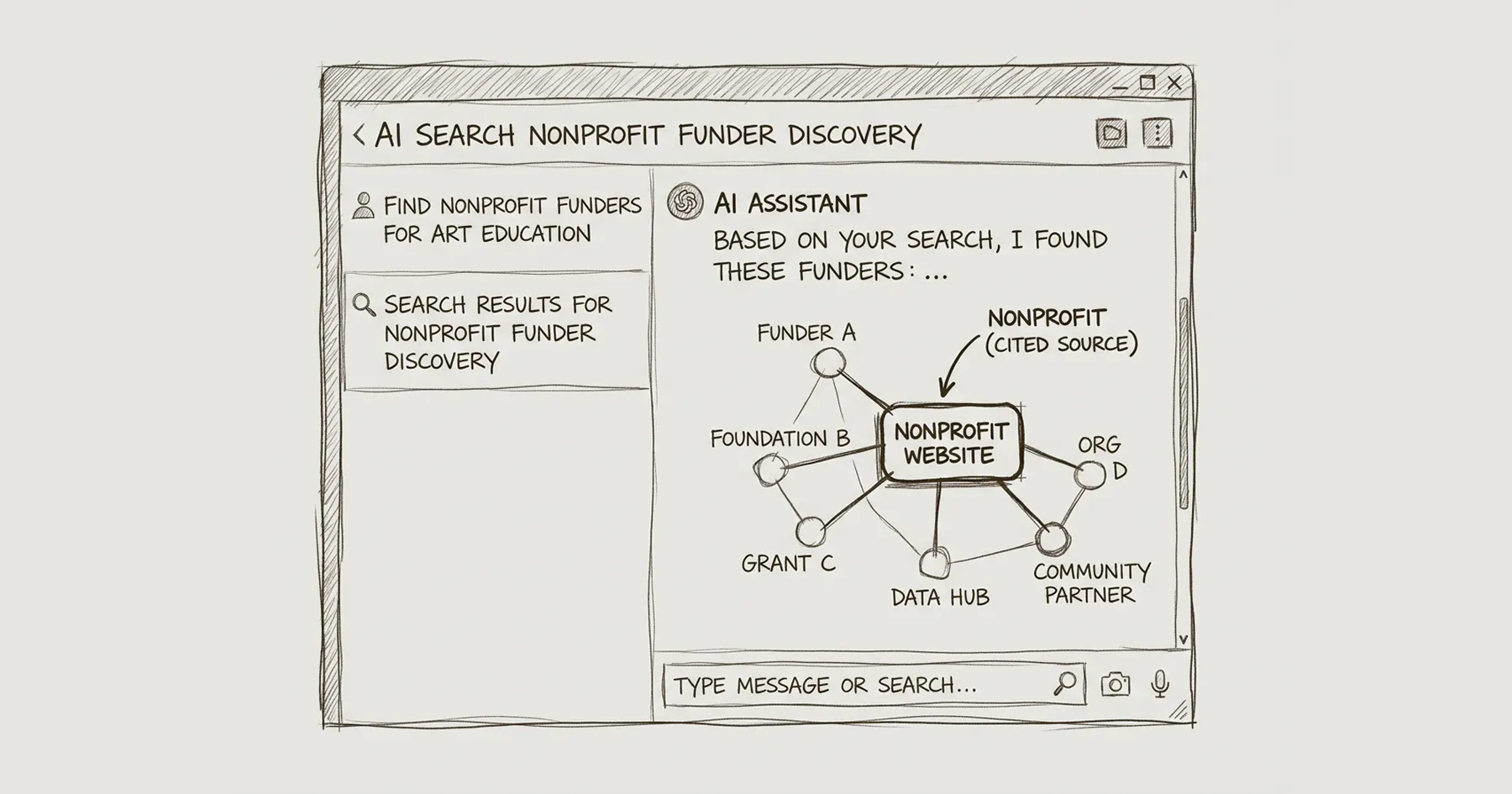

Generative AI tools — ChatGPT, Perplexity, Google's AI Overviews, Microsoft Copilot — have become a routine starting point for research. A grant officer with twenty organisations on a longlist doesn't have time to visit twenty websites in depth before shortlisting. An AI summary that provides a quick read on each organisation's focus, geography, governance, and scale is a useful filter.

The problem is that these tools synthesise from whatever they can find across the web. They don't distinguish between your current annual report and a press release from four years ago. They don't recognise when a programme has ended, a geography has changed, or a leadership team has turned over. They conflate organisations with similar names. They fill in gaps with plausible-sounding content that isn't accurate.

For commercial organisations, an imprecise AI summary is a reputational inconvenience. For a nonprofit navigating a major funding decision, a programme partnership, or a regulatory review, an inaccurate AI summary at the wrong moment is a credibility problem with real institutional consequences.

What AI Tools Actually Get Wrong About Nonprofits

I started running what I think of as an AI entity audit as part of the Blueprint Audit process — searching for clients' organisations across ChatGPT, Perplexity, and Google AI Overviews and documenting what each returns. The patterns that emerge are consistent enough to name.

Geographic scope errors. An organisation that operates across three London boroughs gets described as a "national charity." An international NGO with programmes in seven countries is described as working "primarily in East Africa." Geographic scope is one of the most commonly misrepresented attributes — partly because organisations describe their work inconsistently across different documents, and partly because AI tools generalise from partial information.

Mission conflation. Organisations that work in adjacent areas — two charities focused on youth employment in the same city, two foundations funding arts education — get their work merged or confused. The AI synthesises a description that's accurate for neither but plausible for both. If a funder is evaluating whether you fit their criteria, a conflated mission description can result in being screened out of a process you were eligible for.

Outdated leadership and governance information. Many AI tools rely on training data with a cutoff date, and even those that search the live web can surface stale pages. If your CEO left eighteen months ago, some AI tools may still name them. If your Board composition shifted after a governance review, the old composition may persist in AI summaries. For organisations where governance credibility is a condition of funding, as it is for many statutory and major philanthropy grants, presenting an inaccurate Board picture to a researcher using AI tools is an uncontrolled governance risk.

Inflated or deflated scale. Whether an AI tool calls you "a small charity" or "a significant regional organisation" affects how researchers contextualise everything else they read. Income figures are particularly unreliable: AI tools pick up whatever numbers appear most prominently across indexed documents, which may be a grant amount from a press release rather than the annual income reported in your latest filed accounts.

Programme descriptions that reflect old strategy. Organisations that have evolved their programmes significantly — as most established nonprofits have — find AI tools describing work that ended years ago. A housing charity that has moved from direct provision to systems advocacy may still be described in terms of its older service model. For funders with specific priorities, this mismatch produces incorrect assumptions about fit before a conversation begins.

Why This Is a Governance Problem, Not Just a Marketing Problem

The instinct is to file AI tool inaccuracy under "reputation management" — something the Communications team monitors and corrects. That framing understates the institutional exposure.

Consider the stakeholder groups who are now routinely using AI tools for research:

Grant officers and programme staff at foundations shortlist organisations based on partial information before engaging directly. An AI summary that places your organisation in the wrong geography, describes the wrong mission, or presents an outdated governance structure can remove you from a conversation you were eligible for — without anyone telling you it happened.

Institutional donors and major gift prospects conduct informal research before requesting a meeting or responding to an approach. If what they find through AI tools contradicts what your Development team has told them, the inconsistency itself becomes a credibility signal. Donors notice when an AI summary doesn't match the organisation they've been pitched.

Journalists and policy researchers use AI tools to contextualise organisations quickly. If a journalist covering your sector gets an inaccurate description of your work from an AI tool, that description may shape the angle of their coverage — or their decision about whether to include your voice.

Regulatory bodies and statutory funders are increasingly digitally literate. An AI summary that presents governance information inconsistent with your Charity Commission register entry is a discrepancy that, however it arose, reflects on your institutional communication.

None of these situations require the AI tool to be dramatically wrong. A small inaccuracy — a geographic scope that's slightly off, an income range that's outdated, a programme name that no longer exists — is enough to introduce doubt at a moment when you're not present to correct it.

The governance dimension is this: your organisation is being represented to high-stakes audiences by an automated system you don't control, and the quality of that representation is determined almost entirely by the quality and consistency of the content infrastructure you've built over years. If that infrastructure is weak — inconsistent descriptions across different documents, vague language on the website, key facts not published in HTML where AI tools can index them — the representation will be weak.

That's not a Communications failure. It's an infrastructure failure with governance consequences.

The Content Infrastructure Problem

AI tools synthesise from what they can find. In practice, they tend to favour content that is:

- Published in HTML, not locked in PDFs

- Specific and verifiable, not vague and general

- Consistent across multiple sources

- Attributed to named, credible authors

- Structured with appropriate schema markup

- Present on authoritative external platforms (Charity Commission register, NCVO, sector directories)

Most nonprofit websites fail several of these criteria — not through negligence, but because the content was built for human readers navigating the site, not for machine systems synthesising across sources.

The gap shows up most clearly in what I'd call entity definition: the specific, verifiable description of what your organisation is, who it serves, where it operates, at what scale, under what governance structure, and with what track record. This information may all exist somewhere on your website — but if it's scattered across different pages, expressed differently in different places, or present only in PDFs and annual reports rather than in indexed HTML, AI tools will synthesise it imprecisely.

Organisations with strong entity definition — where the same accurate, specific information appears consistently across the website, the Charity Commission register, NCVO profile, LinkedIn, and any other indexed platforms — receive more accurate AI representations than organisations whose information is fragmented and inconsistent.

This is fixable. But it requires treating entity clarity as an infrastructure task rather than a copywriting task.

What a Governance-Ready Response Looks Like

The organisations navigating AI representation well are doing a few specific things:

Running an AI entity audit. Searching for the organisation by name in the major AI tools, documenting what each returns, and comparing it against what is actually true. This identifies the specific gaps — wrong geography, outdated leadership, missing programme information — that need to be addressed.
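One way to keep an audit like this repeatable is to record each tool's answer as a few structured fields and compare them against a reference record. The sketch below is a minimal illustration, not a description of any particular audit process. The organisation, the field names, and the recorded answers are all hypothetical, and the answers are assumed to have been transcribed by hand rather than fetched from any tool's API.

```python
# Hypothetical reference record: what is actually true about the organisation.
CANONICAL = {
    "geography": "three london boroughs",
    "ceo": "a. example",
    "focus": "youth employment",
}

# Hypothetical answers, transcribed by hand from each AI tool during the audit.
# None means the tool said nothing about that attribute.
RECORDED = {
    "Tool A": {"geography": "national", "ceo": "a. example", "focus": "youth employment"},
    "Tool B": {"geography": "three london boroughs", "ceo": "b. former", "focus": None},
}


def find_gaps(canonical, recorded):
    """Return (tool, field, issue) tuples where an answer differs from the record."""
    gaps = []
    for tool, answers in recorded.items():
        for field, truth in canonical.items():
            answer = answers.get(field)
            if answer is None:
                gaps.append((tool, field, "missing"))
            elif answer.strip().lower() != truth:
                gaps.append((tool, field, f"says '{answer}'"))
    return gaps


audit_gaps = find_gaps(CANONICAL, RECORDED)
for tool, field, issue in audit_gaps:
    print(f"{tool}: {field} {issue}")
```

The exact-match comparison is deliberately strict. In a real audit, a person would still need to judge whether a differently worded answer is wrong or just phrased differently.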

Publishing key facts in HTML, not just PDFs. Any information that a researcher might rely on (annual report summaries, governance information, income figures, programme descriptions, geographic scope) should exist as indexed HTML on the website, not only as a downloadable document. PDFs are harder for AI tools to index accurately, because text extraction often loses headings, tables, and reading order.
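A quick way to test this on a single page is to extract the text a crawler would see in the HTML and check whether each key fact appears in it. The sketch below uses only Python's standard-library `html.parser`. The page markup, the organisation, and the facts are hypothetical placeholders, and a simple substring match is assumed to be good enough for a first pass.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text content, skipping <script> and <style> blocks."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def missing_facts(html, facts):
    """Return the labels of facts whose text does not appear in the page's HTML."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    # Collapse whitespace so facts split across line breaks still match.
    text = " ".join(" ".join(parser.parts).split()).lower()
    return [label for label, value in facts.items() if value.lower() not in text]


# Hypothetical page and facts, for illustration only.
PAGE = """
<html><body>
  <h1>About Example Trust</h1>
  <p>Registered charity number 0000000, working in three London boroughs.</p>
  <a href="annual-report.pdf">Download our annual report</a>
</body></html>
"""
FACTS = {
    "charity number": "0000000",
    "geographic scope": "three London boroughs",
    "annual income": "£1.2 million",
}

print(missing_facts(PAGE, FACTS))
```

Here the income figure is reported as missing because, in this made-up page, it exists only inside the linked PDF.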

Maintaining consistency across external platforms. The Charity Commission register entry, NCVO profile, LinkedIn company page, and any sector directory listings should describe the organisation using the same language, the same scope, and the same key facts as the website. Inconsistency across platforms is a primary driver of AI tool inaccuracy.

Implementing Organisation schema. Structured data that explicitly defines the organisation's name, legal name, charity number, geographic area served, and primary activities gives AI tools machine-readable facts rather than requiring them to infer from prose. This is a technical implementation task — covered in detail in Schema Markup for Nonprofit Websites — but the underlying principle is about content infrastructure, not just code.
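As a rough illustration of what that structured data can look like, the sketch below builds a JSON-LD object in Python and wraps it in the script tag that would sit in the page's HTML. The property names (`legalName`, `identifier`, `areaServed`, `sameAs`) and the `NGO` type come from the schema.org vocabulary. Every value is a placeholder for a hypothetical organisation, and expressing the charity number as a `PropertyValue` identifier is one reasonable choice rather than the only valid one.

```python
import json

# All values below are placeholders for a hypothetical organisation.
organisation = {
    "@context": "https://schema.org",
    "@type": "NGO",
    "name": "Example Trust",
    "legalName": "The Example Trust Limited",
    "url": "https://www.example.org",
    "identifier": {
        "@type": "PropertyValue",
        "propertyID": "Registered charity number",
        "value": "0000000",
    },
    "areaServed": ["Hackney", "Islington", "Camden"],
    "description": "Supports young people into employment across three London boroughs.",
    "sameAs": ["https://www.linkedin.com/company/example-trust"],
}

# Serialise and wrap in the script tag used for JSON-LD in a page's HTML.
json_ld = json.dumps(organisation, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

Generating the markup from a single record like this makes it easier to keep the schema, the website copy, and external profiles aligned on the same facts.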

Reviewing content currency on key pages. Outdated programme descriptions, former leadership still listed, geographic scope that doesn't reflect current operations — these are the content failures that produce the most consequential AI misrepresentations. A periodic content audit that specifically checks currency on governance, leadership, programme, and impact pages is a sensible governance practice regardless of AI tools.
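A periodic check like this can be as simple as a review log and a cutoff. The sketch below flags key pages that have not been reviewed within a chosen window. The page paths, the dates, and the 180-day default are all assumptions made for illustration; the right cadence depends on how often your governance, leadership, and programmes actually change.

```python
from datetime import date, timedelta

# Hypothetical review log: key page -> date its content was last checked.
LAST_REVIEWED = {
    "/about/governance": date(2024, 1, 15),
    "/about/leadership": date(2025, 3, 1),
    "/programmes": date(2023, 6, 30),
    "/impact": date(2025, 2, 10),
}


def overdue_pages(last_reviewed, today, max_age_days=180):
    """Return pages whose last review is older than max_age_days, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [(page, when) for page, when in last_reviewed.items() if when < cutoff]
    return [page for page, _ in sorted(stale, key=lambda item: item[1])]


print(overdue_pages(LAST_REVIEWED, today=date(2025, 6, 1)))
```

Passing `today` in explicitly, rather than calling `date.today()` inside the function, keeps the check reproducible when the log is reviewed at a Board or committee meeting.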

This Is Where Website Governance and Institutional Risk Meet

The website has always been your primary institutional communication channel. What's changed is that its content now feeds automated systems that represent your organisation to high-stakes audiences without your involvement or awareness.

That changes the governance calculus. Website content that is vague, inconsistent, outdated, or buried in PDFs carries an institutional risk that didn't exist five years ago — not because the content is wrong in ways your stakeholders can see and discount, but because it produces automated misrepresentations that your stakeholders encounter without context.

Getting this right isn't a large investment. It's a systematic one: an entity audit, a content consistency review, a schema implementation, and a cadence for keeping key pages current. These are the kinds of infrastructure tasks that the Blueprint Audit surfaces and sequences — alongside the technical and structural work that makes a nonprofit website genuinely fit for institutional purpose.

Further reading:

- Answer Engine Optimisation for Nonprofits — how to structure content so AI tools cite you accurately

- Schema Markup for Nonprofit Websites — the technical implementation that gives AI tools machine-readable facts

- Using AI Tools to Write and Review Nonprofit Website Content — the other side of the AI question: using it in your content workflow

- What Funders See When They Visit Your Nonprofit Website — the due diligence context for content accuracy

- Website Credibility Audit for NGOs — assessing your current content infrastructure

Eric Phung has 7 years of Webflow development experience, having built 100+ websites across industries including SaaS, e-commerce, professional services, and nonprofits. He specialises in nonprofit website migrations using the Lumos accessibility framework (v2.2.0+) with a focus on editorial independence and WCAG AA compliance. Current clients include WHO Foundation, Do Good Daniels Family Foundation, and Territorio de Zaguates. Based in Manchester, UK, Eric focuses exclusively on helping established nonprofits migrate from WordPress and Wix to maintainable Webflow infrastructure.
